The MERRA-2 database provides a web service for downloading the requested data, but it is not flexible enough for large downloads. According to their instructions, wget can be used to download the files once a file list has been generated. The problem is that in some cases, like mine, a complete dataset requires around 15k requests (one file per day since 1980). Here I document the procedure I followed to organize and download the data.

Create script to download and rename the data.

The file list generated by the database looks like this:

https://goldsmr4.gesdisc.eosdis.nasa.gov/data/MERRA2/M2I1NXASM.5.12.4/doc/MERRA2.README.pdf

https://goldsmr4.gesdisc.eosdis.nasa.gov/daac-bin/OTF/HTTP_services.cgi?FILENAME=%2Fdata%2FMERRA2%2FM2I1NXASM.5.12.4%2F1980%2F01%2FMERRA2_100.inst1_2d_asm_Nx.19800101.nc4&FORMAT=bmM0Lw&BBOX=22.86%2C-98.617%2C25.497%2C-96.749&LABEL=MERRA2_100.inst1_2d_asm_Nx.19800101.SUB.nc&SHORTNAME=M2I1NXASM&SERVICE=L34RS_MERRA2&VERSION=1.02&DATASET_VERSION=5.12.4&VARIABLES=PS%2CQV10M%2CQV2M%2CSLP%2CT10M%2CT2M%2CU10M%2CU2M%2CU50M%2CV10M%2CV2M%2CV50M

https://goldsmr4.gesdisc.eosdis.nasa.gov/daac-bin/OTF/HTTP_services.cgi?FILENAME=%2Fdata%2FMERRA2%2FM2I1NXASM.5.12.4%2F1980%2F01%2FMERRA2_100.inst1_2d_asm_Nx.19800102.nc4&FORMAT=bmM0Lw&BBOX=22.86%2C-98.617%2C25.497%2C-96.749&LABEL=MERRA2_100.inst1_2d_asm_Nx.19800102.SUB.nc&SHORTNAME=M2I1NXASM&SERVICE=L34RS_MERRA2&VERSION=1.02&DATASET_VERSION=5.12.4&VARIABLES=PS%2CQV10M%2CQV2M%2CSLP%2CT10M%2CT2M%2CU10M%2CU2M%2CU50M%2CV10M%2CV2M%2CV50M

https://goldsmr4.gesdisc.eosdis.nasa.gov/daac-bin/OTF/HTTP_services.cgi?FILENAME=%2Fdata%2FMERRA2%2FM2I1NXASM.5.12.4%2F1980%2F01%2FMERRA2_100.inst1_2d_asm_Nx.19800103.nc4&FORMAT=bmM0Lw&BBOX=22.86%2C-98.617%2C25.497%2C-96.749&LABEL=MERRA2_100.inst1_2d_asm_Nx.19800103.SUB.nc&SHORTNAME=M2I1NXASM&SERVICE=L34RS_MERRA2&VERSION=1.02&DATASET_VERSION=5.12.4&VARIABLES=PS%2CQV10M%2CQV2M%2CSLP%2CT10M%2CT2M%2CU10M%2CU2M%2CU50M%2CV10M%2CV2M%2CV50M

https://goldsmr4.gesdisc.eosdis.nasa.gov/daac-bin/OTF/HTTP_services.cgi?FILENAME=%2Fdata%2FMERRA2%2FM2I1NXASM.5.12.4%2F1980%2F01%2FMERRA2_100.inst1_2d_asm_Nx.19800104.nc4&FORMAT=bmM0Lw&BBOX=22.86%2C-98.617%2C25.497%2C-96.749&LABEL=MERRA2_100.inst1_2d_asm_Nx.19800104.SUB.nc&SHORTNAME=M2I1NXASM&SERVICE=L34RS_MERRA2&VERSION=1.02&DATASET_VERSION=5.12.4&VARIABLES=PS%2CQV10M%2CQV2M%2CSLP%2CT10M%2CT2M%2CU10M%2CU2M%2CU50M%2CV10M%2CV2M%2CV50M

https://goldsmr4.gesdisc.eosdis.nasa.gov/daac-bin/OTF/HTTP_services.cgi?FILENAME=%2Fdata%2FMERRA2%2FM2I1NXASM.5.12.4%2F1980%2F01%2FMERRA2_100.inst1_2d_asm_Nx.19800105.nc4&FORMAT=bmM0Lw&BBOX=22.86%2C-98.617%2C25.497%2C-96.749&LABEL=MERRA2_100.inst1_2d_asm_Nx.19800105.SUB.nc&SHORTNAME=M2I1NXASM&SERVICE=L34RS_MERRA2&VERSION=1.02&DATASET_VERSION=5.12.4&VARIABLES=PS%2CQV10M%2CQV2M%2CSLP%2CT10M%2CT2M%2CU10M%2CU2M%2CU50M%2CV10M%2CV2M%2CV50M

https://goldsmr4.gesdisc.eosdis.nasa.gov/daac-bin/OTF/HTTP_services.cgi?FILENAME=%2Fdata%2FMERRA2%2FM2I1NXASM.5.12.4%2F1980%2F01%2FMERRA2_100.inst1_2d_asm_Nx.19800106.nc4&FORMAT=bmM0Lw&BBOX=22.86%2C-98.617%2C25.497%2C-96.749&LABEL=MERRA2_100.inst1_2d_asm_Nx.19800106.SUB.nc&SHORTNAME=M2I1NXASM&SERVICE=L34RS_MERRA2&VERSION=1.02&DATASET_VERSION=5.12.4&VARIABLES=PS%2CQV10M%2CQV2M%2CSLP%2CT10M%2CT2M%2CU10M%2CU2M%2CU50M%2CV10M%2CV2M%2CV50M

Each data URL corresponds to one day of data (the first entry is only a link to the README). So I created a simple script that does the following:

- Download the file.

- Rename the file to YYYYMMDD.nc4
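The date needed for the new name is already embedded in each URL, so the renaming step can be sketched on its own. The function below is only an illustration, and its name `url_to_filename` is my own. It assumes the URL layout shown in the list above: the text after `?` starts with the `FILENAME=` parameter, and the date is the sixth dot-separated field of that text.

```bash
#!/bin/bash
# Derive the YYYYMMDD.nc4 name from one subset URL.
# Assumes the URL layout shown in the file list above.
url_to_filename() {
    local url=$1
    # 1st cut: keep the query string (everything after "?").
    # 2nd cut: take the 6th dot-separated field, which holds the date.
    echo "$(echo "$url" | cut -d "?" -f 2 | cut -d "." -f 6).nc4"
}
```

Called with the first data URL from the list, `url_to_filename` prints `19800101.nc4`. If the dataset version or file naming changes, the field number would probably need adjusting.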

The script created:

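    #!/bin/bash
    # Usage: pass the file list generated by the MERRA-2 web service as $1.

    filelist=$1
    mkdir -p data    # wget -O does not create the output directory

    while IFS= read -r line; do
        # Skip lines without a query string (blank lines, the README link)
        [[ $line == *"?"* ]] || continue

        # Get date from URL: 6th dot-separated field of the query string
        filename=$(echo "$line" | cut -d "?" -f 2 | cut -d "." -f 6).nc4

        echo "Downloading $filename"
        wget -q --load-cookies ~/.urs_cookies \
            --save-cookies ~/.urs_cookies \
            --auth-no-challenge=on \
            --keep-session-cookies \
            --content-disposition "$line" -O "data/$filename"

        # Check that the file exists and is not empty
        # (wget -O creates the file even when the download fails)
        if [ ! -s "data/$filename" ]; then
            echo "ERROR: $filename not downloaded!!!"
        fi
    done < "$filelist"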
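With around 15k requests, some downloads will likely fail along the way, so it can be useful to rebuild a list of the URLs that still need fetching. The following is a minimal sketch rather than part of the original procedure. The function name `list_missing` is hypothetical, and it assumes the files are stored as `data/YYYYMMDD.nc4`, as in the script above.

```bash
#!/bin/bash
# Print every URL from the file list whose renamed file is missing or empty.
# $1: file list from the web service; $2: output directory (default: data).
list_missing() {
    local filelist=$1 outdir=${2:-data} url name
    while IFS= read -r url; do
        # Skip lines that are not subset requests (e.g. the README link).
        [[ $url == *"?"* ]] || continue
        # Same date extraction as the download script.
        name=$(echo "$url" | cut -d "?" -f 2 | cut -d "." -f 6).nc4
        # -s is true only for files that exist and are not empty.
        [ -s "$outdir/$name" ] || echo "$url"
    done < "$filelist"
}
```

Assuming the download script is saved as `download.sh` and the list as `filelist.txt` (both names are placeholders), running `list_missing filelist.txt > retry.txt` followed by `./download.sh retry.txt` would retry only the missing days.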

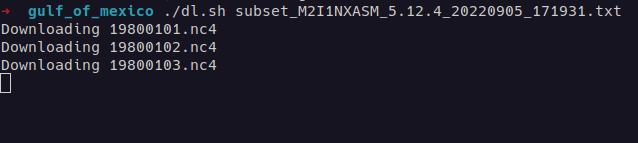

The download process will look like this: